Pearson

Field Research

Updated assessments provide the most accurate results

Pearson Assessments provides reliable educational and psychological assessment tools that professionals in psychology, speech therapy, and other fields use every day. As the leading creator of standardized assessments, our team is committed to timely updates that produce high-quality, diverse assessments, and we work diligently to bring you new and updated assessments each year.


Your data is the backbone of every update

The Field Research team within Pearson Assessments is responsible for collecting the required data for our assessments. Field Research must ensure that the data for each assessment is of the highest quality. We equip professionals with the tools and support required for accurate, diverse, and timely data collection. Our focus on quality data collection is an integral part of the test development process, enabling Pearson to create reliable tools for obtaining clinical diagnoses, determining individualized education program (IEP) eligibility, and improving the overall quality of patient care.

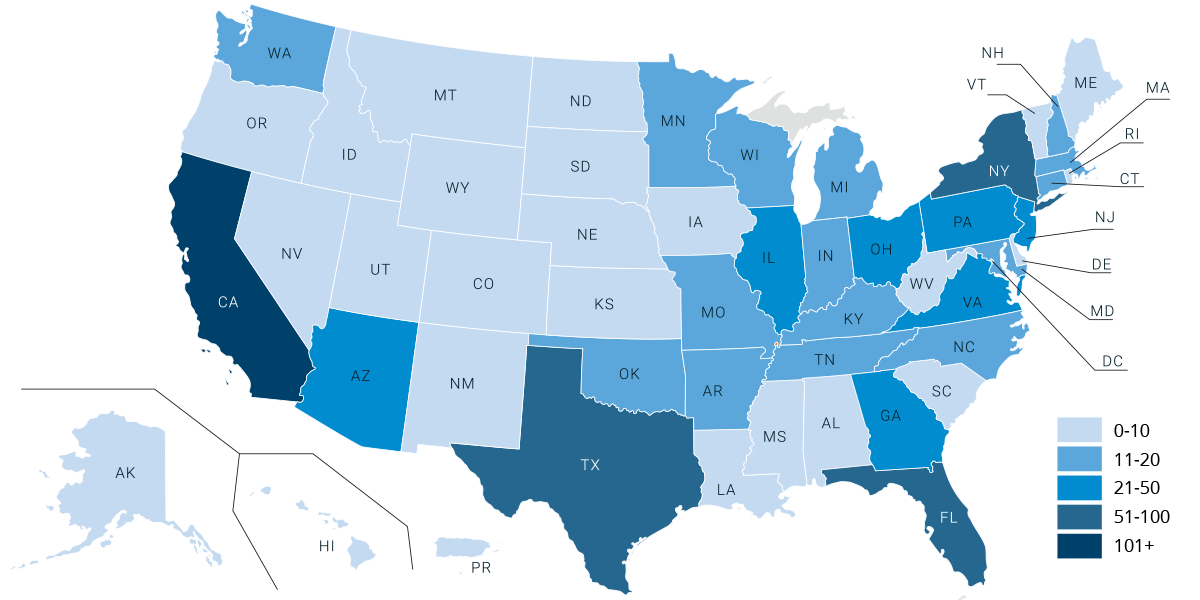

Currently, we partner with over 900 qualified professionals across the United States to collect this data! These professionals serve as Field Research Examiners during data collection opportunities. As part of the test development process, Examiners utilize their professional skills to administer research edition assessments to collect data for research purposes. Examiners are asked to recruit individuals from their personal and/or professional networks to participate in our research. Examiners, you fill a vital role in ensuring our assessments represent demographic, ethnic, and socioeconomic diversity. Register to become a Field Research Examiner and add your community to our pool of more than 19,000 candidates that contribute data for our assessments! The populations you serve need representation!

Partnering with Field Research

Professionals serving as Examiners partner directly with Pearson throughout the entirety of a data collection project. Examiners may work during or outside their professional work setting to recruit candidates to test, gather candidate demographic information and administer research edition assessments.

Population representation

During data collection projects, the Field Research team works hard to ensure that every assessment includes demographic, ethnic, and socioeconomic diversity. Our goal is to collect data from every region of the country and to achieve diverse representation across ethnicities and socioeconomic statuses. As Examiners, you can play a pivotal role in this effort. By participating with us, you are guaranteeing that the populations in your communities are represented in the assessment updates. In addition, your access to special population groups is key to our data collection success!

Sneak peek

Registered Field Research Examiners get a first look at assessments before they are published! The research edition of an assessment is provided to Examiners to use during data collection projects. Examiners get to view proposed new test items, format changes, and updates to artwork and the Record Form. As an Examiner, you are also provided with training and specific administration details for the new assessment and get hands-on administration experience. Gain expertise on future assessments!

Compensation

The Pearson Field Research team values your time and expertise. We understand that on top of your personal and professional responsibilities, you are using your time to ensure that our assessments include the highest quality data. In return for your participation in data collection projects, we provide compensation to you and to the candidates that you recruit and test. Field Research Examiners are compensated via check or direct deposit for usable cases. Test subjects or candidates are compensated via a digital gift card. *Compensation varies from project to project.

Collaboration

As a Field Research Examiner, you can provide feedback and insight to the assessment content development team and research directors. Collaboration does not stop there! Each data collection project is an opportunity to partner with individuals who are just as passionate about research as you are. Field Research data collection projects will provide collaboration opportunities with the Field Research team! Q&A sessions, forums, webinars, and panel discussions are great opportunities to provide valuable insights that will help us ensure our assessments remain relevant for your professional use.

Field impact

By participating in data collection opportunities, you will make a significant impact in your field! Your partnership with Pearson Field Research will support your colleagues as they use these assessments for many years to come. With the help and expertise of Examiners, Pearson Clinical can continue to bring high-quality, relevant products to market.

Current and upcoming opportunities

- Clinical Evaluation of Language Fundamentals®, Sixth Edition (CELF®-6)

- Wechsler Intelligence Scale for Children®, Sixth Edition (WISC®-6)

- Vineland™ Adaptive Behavior Scales, Fourth Edition

Register

The first step to being a Field Research Examiner is to register for your Examiner Account within our Field Research Portal. The portal is where you will upload your payment information and preferences (W9, Direct Deposit, etc.), give your contact information for important updates, and keep track of your candidates’ assignments! Once logged in, you will want to keep the Portal bookmarked, and visit it often to stay up to date on any new requirements or assignments. The Portal is also where you will send and collect consent forms and questionnaires for your candidates.

Recruit Candidates

A critical part of obtaining quality data during standardization is ensuring we have candidates from diverse backgrounds to select from. Actively recruiting eligible candidates in your community is key! Our greatest need is for candidates who are typically developing with no diagnoses. Our Examiners find candidates by talking with friends and family, posting on social media, or connecting with sites in their area. Depending on the assessment, some clinical populations may be needed as well. Your connection to local clinics will be pivotal in recruiting these needed candidates. Our Field Research team will provide flyers and support to help you through your recruitment journey! Once you recruit interested candidates, add them to your portal account as soon as possible so that the Field Research team can review them for assignment!

What is provided

When you and your candidates are selected to participate in a specific data collection project, all materials needed to complete testing and to send completed tests to Pearson will be shipped directly to you in a “kit.” The kit generally includes all test administration procedures, test stimulus books, test protocols, response booklets (if required), any additional administration materials such as manipulatives, and envelopes for returning used protocols. Additional testing materials will be provided as needed throughout the data collection effort. The Field Research team may also provide additional support materials, such as flyers that can be used for recruiting.

Feedback on test administration is provided by both the content development team and the Field Research team to ensure that tests are administered according to strict standardization procedures. It is essential that these specific administration procedures are followed so that Pearson Clinical has quality data to use for standardization.

Complete Testing

Your involvement is critical to the success of our data collection projects, and we value your participation! When qualified candidates are assigned to a project, candidate assignments will be visible in the Field Research Portal and will have a due date listed. These due dates are important to keep the project moving forward and ensure that we gather accurate and valid data. The Field Research team will provide you with all the necessary tools (e.g., video tutorials, administration guidelines) to support you every step of the way! Using the return envelopes in your test kit, you will mail completed protocols to Pearson from your nearest UPS location. Once all testing for a data collection project is complete, our team will reach out to have you return your kit and any unused materials. Keep an eye on your portal account so that you see the alert when the next project you are eligible for begins!

Stay Connected

Examiners are expected to stay connected with our team throughout the data collection project. Our team may reach out to request candidate availability for testing, confirm your shipping address, or share updated candidate needs throughout the life of the project. Viewing and responding to emails in a timely manner is required to ensure that tests are administered and assignment due dates are met. Keeping an open line of communication with your Field Research point of contact will streamline the entire process for you from assignment to payment!

Contact us. We are here to support you!

If you have any questions, please contact the Pearson Field Research Examiner Relations team.